Research Fellows Cat Jones and Daire Healy from the Climate + Co-Centre explore the challenge of evidence overload and how AI can help researchers make sense of it.

Scientific evidence is our strongest defence against misinformation and one of the most reliable guides for policy decisions. In principle, more evidence should mean better decisions and greater confidence. And today, we have more evidence than ever before.

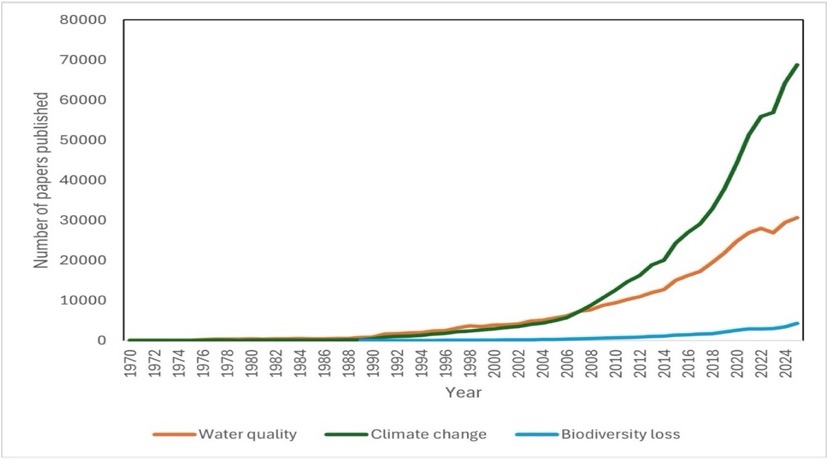

Consider that since 1970, annual publications on climate change alone have grown from a few hundred to nearly 70,000 papers. Research on water quality and biodiversity shows similarly dramatic increases.

The figure above shows the number of papers indexed in the Web of Science database each year since 1970 under the keywords ‘water quality’, ‘climate change’, and ‘biodiversity loss’. The data are derived from Clarivate™ (Web of Science™).

But there is a catch: no human can possibly read it all.

A researcher investigating climate change in 2000 would have faced around 3,000 relevant papers — a substantial task, but one that could plausibly be tackled over several months. Today, the same researcher would confront more than half a million papers, with tens of thousands more published every year.

Keeping up is no longer merely difficult. It is impossible.

This widening gap between the volume of evidence and our capacity to process it is one of the defining challenges of modern science.

Turning to AI — Carefully

The potential here is significant. AI language models can rapidly process vast bodies of scientific literature, identify patterns, extract key findings, and synthesise insights across thousands of studies in the time it would take a human researcher to read a handful. Used well, they offer a genuine way to transform an overwhelming archive into something navigable.

Our research explores whether these tools can help address this information overload, focusing on evidence related to climate change, biodiversity loss, and water quality across the British and Irish Isles.

But using AI responsibly in this space requires more than speed. It requires trust.

“The goal is not to replace human expertise — it is to free researchers to focus on what humans do best: applying critical judgement, asking better questions, interpreting nuance, and communicating robust evidence to policymakers and the public.”

Dr Cat Jones

To do that, AI systems must be grounded in transparent, verifiable sources.

Teaching AI to Show Its Work

Using large language models to summarise research isn’t as simple as asking them to read a pile of papers and report back. For these models to function as reliable evidence synthesis tools, they need two things: controlled access to a defined, trusted body of literature, and a clear way to link every statement back to its source.

This is why we are investigating a method known as retrieval-augmented generation, or RAG.

RAG provides a framework for grounding AI-generated answers in traceable evidence. Each paper in the trusted collection is broken into sections and converted into numerical representations that capture meaning (known as embeddings), allowing the system to compare ideas and concepts, not just keywords. When a question is asked, the system retrieves the passages most relevant in meaning to the query, and the language model generates its response using only that retrieved material, citing the source text directly.

If no relevant information exists in the literature provided, the system says so.

This last point matters. Rather than drawing on patterns learned from across the open internet, the model is constrained to a defined and transparent evidence base. Domain experts can then review the sources, assess the quality of the synthesis, and apply their own judgement to the findings.
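For readers who want to see the shape of the idea, here is a minimal Python sketch of the retrieval side of RAG. It is an illustration under stated assumptions, not our actual pipeline: the three passages and their source labels are invented, the `embed` function is a simple word-count stand-in for a real embedding model (so it matches shared words rather than meaning), the `min_score` threshold is arbitrary, and the final step returns the cited passages directly where a real system would pass them to a language model.

```python
import math
import re
from collections import Counter

# Hypothetical trusted collection: (source label, section text). Invented for illustration.
CORPUS = [
    ("paper_A, section 2", "Nitrate runoff from farmland lowers water quality in river catchments."),
    ("paper_B, section 4", "Warming seas are shifting the ranges of marine species northwards."),
    ("paper_C, section 1", "Peatland restoration can reduce carbon emissions and improve water quality."),
]

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Index every section once, keeping its source label alongside its vector.
INDEX = [(source, text, embed(text)) for source, text in CORPUS]

def retrieve(question, k=2, min_score=0.2):
    """Return up to k (score, source, text) tuples scoring at least min_score."""
    q = embed(question)
    scored = [(cosine(q, vec), source, text) for source, text, vec in INDEX]
    scored = [s for s in scored if s[0] >= min_score]
    return sorted(scored, reverse=True)[:k]

def answer(question):
    """Return cited passages, or say so when nothing relevant is found."""
    hits = retrieve(question)
    if not hits:
        return "No relevant information found in the provided literature."
    return "\n".join(f"{text} [{source}]" for _, source, text in hits)

print(answer("What affects water quality in rivers?"))
print(answer("How do volcanoes erupt?"))
```

The first question returns the two water-quality passages, each tagged with its source; the second returns the "no relevant information" message because nothing in the collection scores above the threshold. Keeping the source label attached to every passage from indexing through to the answer is what makes each statement traceable.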

From Overload to Insight

The implications extend beyond academic research. Policymakers, conservationists, and public health officials all face the same problem: critical decisions that depend on evidence no single person can fully absorb. A well-designed AI system, grounded in trusted literature and built for transparency, could help surface what matters most — whether that is emerging risks to a river catchment, shifts in biodiversity data, or new findings on climate tipping points.

Head to the project page to find out more about this research.